Call And Response, Bringing Sound To Life

Our First Exhibition

Call and Response is an interactive art project created in collaboration by Ainsley Wagoner, Andrew Allred, and myself.

We recently displayed version 1.0 of Call and Response in the Lexington Art League's Expanding Fields Exhibition. It was a blast to be part of this exhibition with so many other great artists.

The general idea for Call and Response was to create meaningful visual feedback in response to audio queues. We weren't thinking specific speech commands but broader aspects of communication such as speaking vs singing or clapping. We sought to establish a dialogue between the projected canvas and the participant.

Behavior

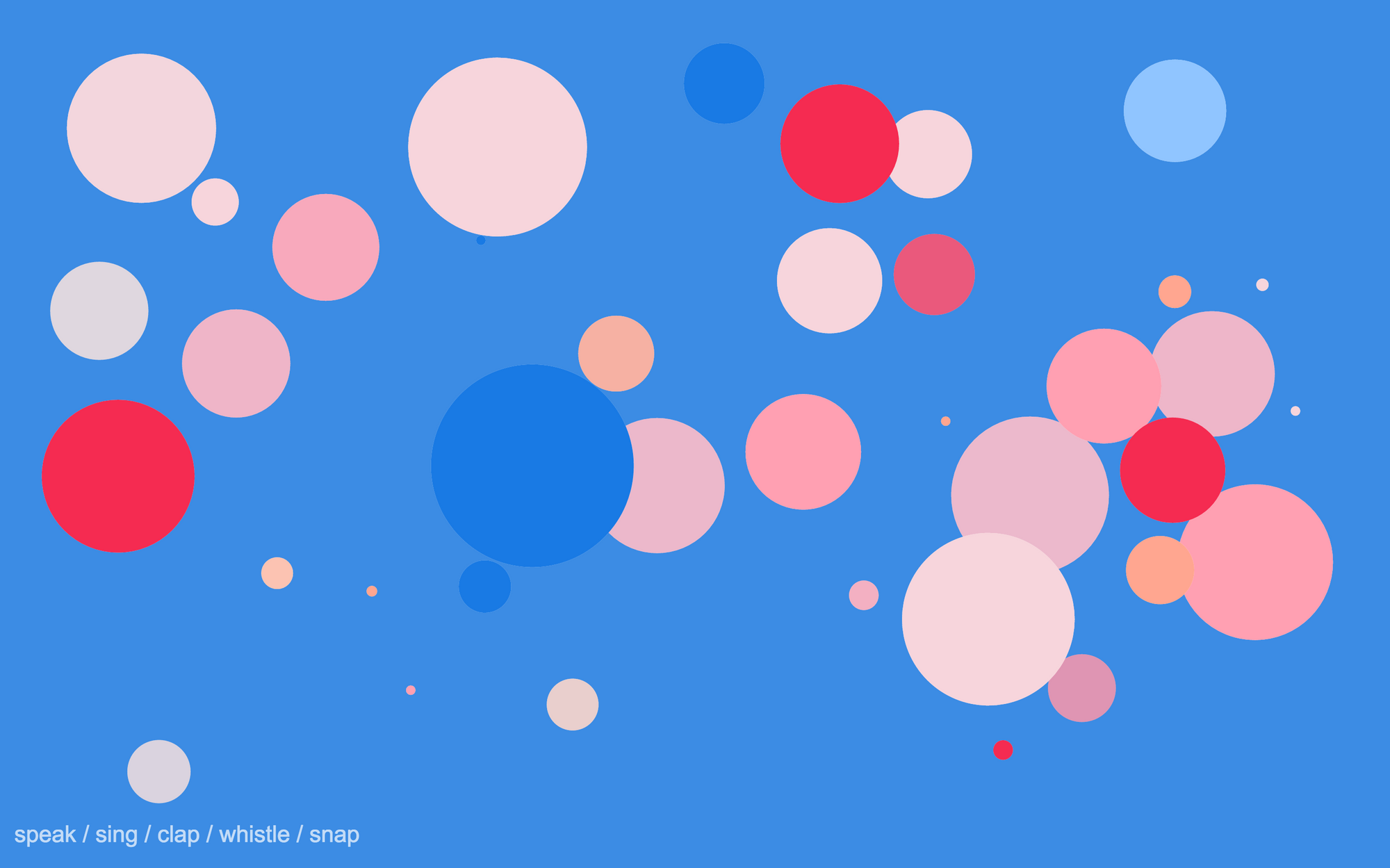

In this iteration of Call and Response, when the exhibit detects no sound or only noise, all you see is a field of tiny dots, that move very slightly in a random walk. They fade randomly from white to an assortment of colors.

The dots are randomly distributed, but never placed too close together, creating an even distribution across the screen.

When the exhibit detects that someone is speaking into the microphone, the dots stop moving and assume a white background. They begin growing. Each dot has a growth rate randomly chosen within a range. As long as someone is speaking the dot will continue to grow at that rate. Each of the dots has a maximum size chosen randomly within a range, when that size is reached it pops! That is, it is repositioned on the field and restored to its original size. There is a chance with any given pop that new dot will also be created and placed in the field.

If the participant begins to sing colors are overlaid on the dots. The opacity of the color increases as singing continues.

There are multiple color schemes to the exhibit. Percussive sounds such as clapping cause the themes to cycle.

Implementation

This project is implemented using the Electron platform. This is the first project I've done with Electron, but I'm no strange to NodeJS or JavaScript. Thanks to the power of the Web Audio API javascript offers a surprisingly good platform for this type of project.

We do no use many external Java Script dependencies. We considered using processing early in the project but most of what we needed was avialbe through the JavaScript Web Audio API and just basic ES6 goodness.

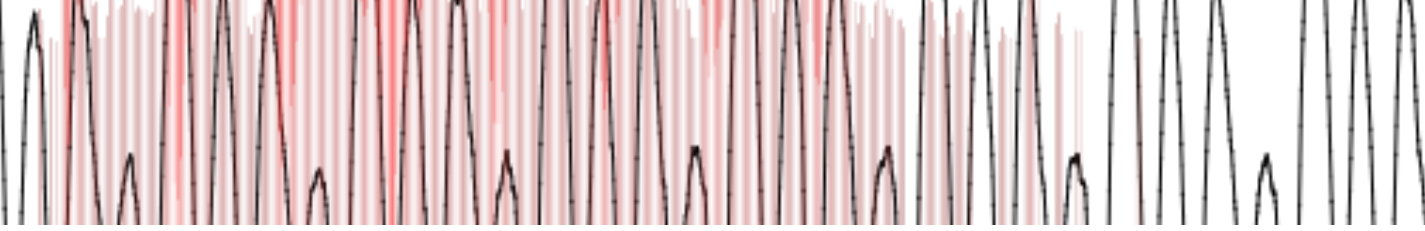

For the speech detection I implemented Katz algorithm and applied it to the audio's time domain data to compute a rough aproximation of the fractal dimension.

The fractal dimension of 2D time series data like can be thought of as an indicator of how rough or smooth a curve is. This has been used to detect speech pathology; so, I thought it might be able to help us detect the difference between random background noise or nonsense vocalization and speech.

Through trial and error I derived a range for the fractal dimension which we recognize as speech. It is a bit finicky and had to be honed for the microphone, but it works!

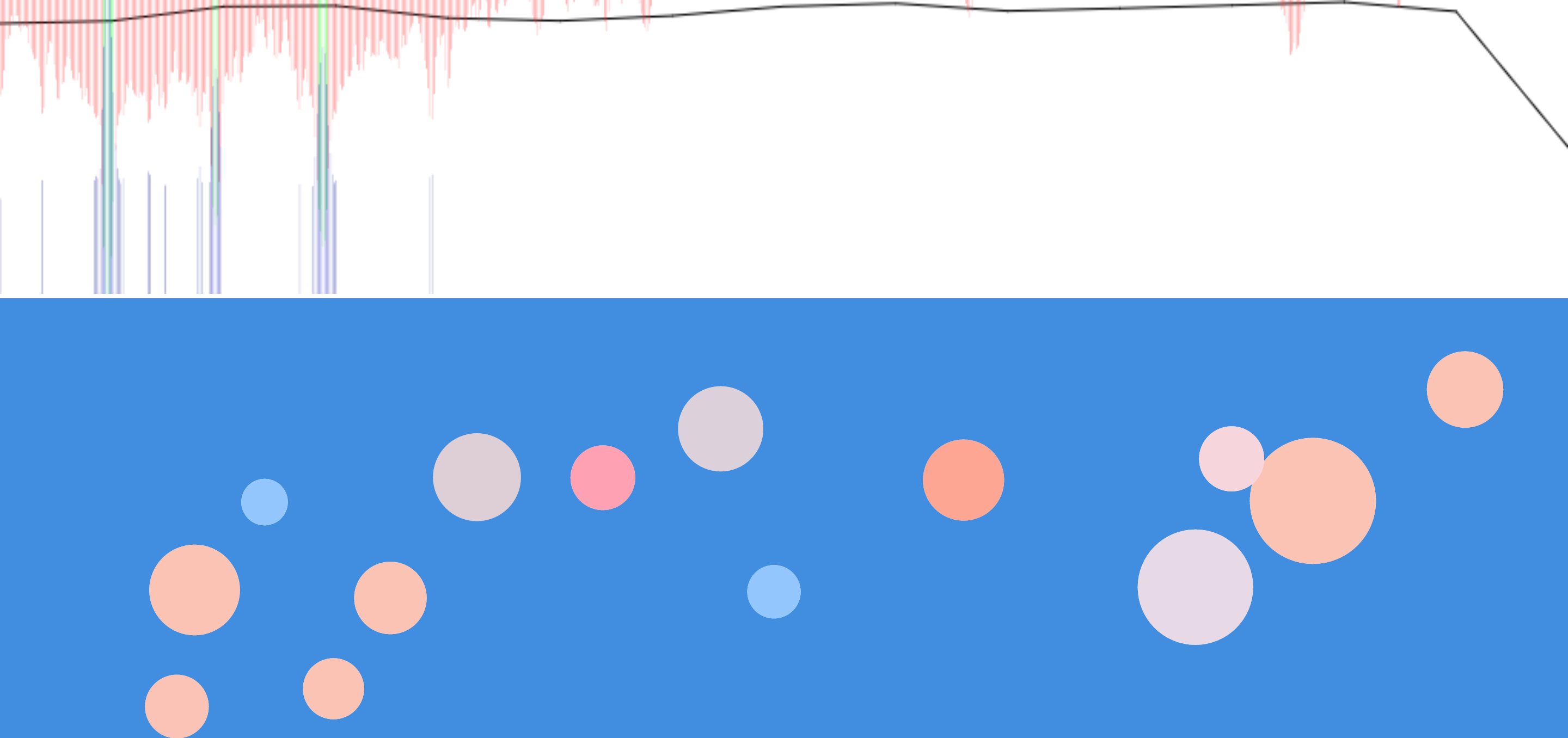

Singing detection proved a bit more challenging. To achieve this Andrew makes use of the fast fourier transform. When only noise or plain speech is present the frequencies tend to be fairly distributed, but when singing is present you notice small discrete clusters of frequencies (the three spikes visible below).

For clap detection we just look for dramatic changes in the time domain data. This is the simplest state to test for.

In both singing and speech detection false positives are an issue. Sometimes it was pretty easy to understand why there was a false positive, for example, stretching out a word while talking looks very much like singing for a brief period. We smooth the effects of false positives by using a visual feedback method that relies on growth and color transition rates. This means that the effects of the detection are cumulative over time. A brief false positive for speech results in little visual change. Only when speech is detected over a sustained period of time does the effect become pronounced.

Going Forward

We hope to continue to improve and present this piece in other galleries and settings. Already Ainsley has made use of a modified version in a live musical performance performance (full performance).