Cursor Follow-up, Two Months In -- AI Rickrolled Me

It's now been a couple of months since my first blog post about my experiment with Cursor, "Cursor – My First Dance with Vibe Coding."

Note: It's actually been much longer; I forgot to press publish. Since this was sitting here ready, I figured I'd go ahead and release it. Better late than never.

I still think this is a very useful tool and one of the better applications of Generative AI. I've made a lot of progress on DLA Studio, implementing nice-to-have features that I've usually skipped in past spare-time projects, and I believe I have managed to maintain code quality.

I have also seen where this technology can go wrong.

The Bad

Be very careful with tests. In theory, the better your tests, the more you can lean on generative tech, because if its changes pass the tests, everything is good, right?

Test coverage is rarely as good as it needs to be, so we are already on shaky footing, but let's pretend that the tests are awesome :-)

It's not uncommon to have to update unit tests when you make changes, because keeping them decoupled from implementation details is hard. The AI, like any human, will have to update the tests too. I have seen several AI-generated pull requests that only passed because the AI had modified the tests so that they no longer tested anything at all. If you skim the pull request you won't notice, because the test is still there and often still quite verbose, but look closely and all those asserts are against mocked data :-(
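To make the pattern concrete, here is a minimal Python sketch of a "hollow" test next to a real one. All names are hypothetical, not taken from any actual pull request, and each "test" simply returns `True` when it passes to keep the sketch small. The hollow version configures a mock and then asserts against the value it just configured, so it still passes when the implementation is deliberately broken.

```python
from unittest import mock


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    return round(price * (1 - percent / 100), 2)


def broken_discount(price: float, percent: float) -> float:
    """A deliberately wrong version standing in for a regression."""
    return price


def hollow_test(impl) -> bool:
    # Looks busy, but impl is never called: the assertion checks the value
    # the mock was configured to return a few lines earlier.
    pricing = mock.Mock()
    pricing.apply_discount.return_value = 90.0
    result = pricing.apply_discount(100.0, 10.0)
    pricing.apply_discount.assert_called_once_with(100.0, 10.0)
    return result == 90.0


def real_test(impl) -> bool:
    # Calls the implementation itself and checks a known answer.
    return impl(100.0, 10.0) == 90.0


if __name__ == "__main__":
    for name, impl in [("correct", apply_discount), ("broken", broken_discount)]:
        print(f"{name}: hollow={hollow_test(impl)} real={real_test(impl)}")
```

Only `real_test` notices the broken version; the hollow one passes either way, which is exactly why a skim of the diff doesn't catch it.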

Another thing Cursor can help with is backfilling tests, but again, be careful. I tried to have it help me build a module for running integration tests against a large Java code base using [Selenide](https://selenide.org/). One of the tricky parts of the initial boilerplate was a strategy for starting and stopping the service between test suites that wouldn't take too long, would always be up to date with the rest of the project, and would clean up after itself nicely even if the tests failed or crashed. I had some ideas about how to handle this well, and I tried walking Cursor through them, but it was tricky and Cursor got stuck.

Did it admit defeat when it got stuck?! Hell no, LOL! It lied. It quickly created a parallel server that returned hard-coded HTML and added code to the build process to start that server when running tests, so the tests appeared to pass even though the server we were trying to test never started.
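For comparison, here is a minimal Python sketch of the shape of harness I was after; it is not the Java/Selenide module itself. Assumptions: the "service" is a stand-in HTTP server started in-process on a free port, and it exposes a hypothetical `/health` endpoint. In a real project you would launch the actual build as a subprocess and hand it the token (for example through an environment variable). The idea: start the service once per suite, confirm that whatever answers is the instance you launched (which a hard-coded stub can't fake), and shut it down in a `finally` so cleanup happens even when tests fail.

```python
import contextlib
import secrets
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def make_handler(instance_token: str):
    """Hypothetical stand-in for the service under test.

    GET /health echoes the per-run token; any other path returns a page body.
    """

    class Handler(BaseHTTPRequestHandler):
        def do_GET(self):
            body = instance_token.encode() if self.path == "/health" else b"hello"
            self.send_response(200)
            self.send_header("Content-Type", "text/plain")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, format, *args):
            pass  # keep test output quiet

    return Handler


@contextlib.contextmanager
def running_service():
    """Start the service on a free port, confirm that the instance answering is
    the one we launched, and always shut it down, even if the tests raise."""
    token = secrets.token_hex(8)  # unique per run, so a stub can't hard-code it
    # Port 0 asks the OS for any free port, so parallel runs don't collide.
    server = ThreadingHTTPServer(("127.0.0.1", 0), make_handler(token))
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    base_url = f"http://127.0.0.1:{server.server_address[1]}"
    try:
        with urllib.request.urlopen(f"{base_url}/health", timeout=5) as resp:
            if resp.read().decode() != token:
                raise RuntimeError("service under test is not the instance we started")
        yield base_url
    finally:
        # Runs on success, on assertion failures, and on unexpected errors.
        server.shutdown()
        server.server_close()
        thread.join()


if __name__ == "__main__":
    with running_service() as url:
        with urllib.request.urlopen(f"{url}/", timeout=5) as resp:
            print(resp.read().decode())
```

Tests run inside the `with` block against the URL it yields, so there is no separate server for a build step to quietly swap in.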

It Never Gives Up When It Really Should

This is a theme I've noticed. The models are trained to produce results, and they don't really have a way to stop and reach out to colleagues. In small, modern code bases with a consistent style they work great, but legacy code bases that trip up humans tend to trip up the LLMs as well.

While working through one large AI-generated pull request recently, I noticed that it had quietly dropped an entire feature. When I dug in, the reason became clear. We were having it update dependencies, and there was no clear analog in the upgraded SDK for the way we had implemented the feature. A human would have a lot of trouble with this too. The difference is that a human who hit this would pause and reach out to management to understand the broader business concerns, asking questions like: Do we need this? Why do we need it? Can we extend the deadline to find a solution? The LLM, after many failed attempts to produce a working build that migrated the feature, simply dropped the code and deleted the tests :-(
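One cheap guard during review is to list deleted test files before reading anything else. Below is a rough Python sketch built on `git diff --name-status`; the regex for what counts as a test path is an assumption you would adapt to your project's layout (here: `test/` or `tests/` directories such as Maven's `src/test/`, `*Test.java`, `test_*.py`, and `*_test.go`).

```python
import re
import subprocess

# Assumed test-file conventions; adjust to your project's layout.
TEST_PATH = re.compile(
    r"(^|/)tests?/"           # Maven-style src/test/... or a top-level tests/ dir
    r"|(^|/)test_[^/]*\.py$"  # pytest-style test_*.py
    r"|Test\.java$"           # JUnit-style FooTest.java
    r"|_test\.go$"            # Go-style foo_test.go
)


def deleted_tests(name_status: str) -> list[str]:
    """Return test files marked as deleted (status D) in `git diff --name-status` output."""
    deleted = []
    for line in name_status.splitlines():
        parts = line.split("\t")
        if len(parts) >= 2 and parts[0] == "D" and TEST_PATH.search(parts[1]):
            deleted.append(parts[1])
    return deleted


def deleted_tests_in_branch(base: str, head: str = "HEAD") -> list[str]:
    """Report test files deleted on head since it diverged from base."""
    result = subprocess.run(
        ["git", "diff", "--name-status", f"{base}...{head}"],
        capture_output=True,
        text=True,
        check=True,
    )
    return deleted_tests(result.stdout)
```

From a checkout you might call `deleted_tests_in_branch("origin/main")` (the branch name is an assumption). It won't catch tests hollowed out in place, so it complements reading the diff rather than replacing it.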

The Silly

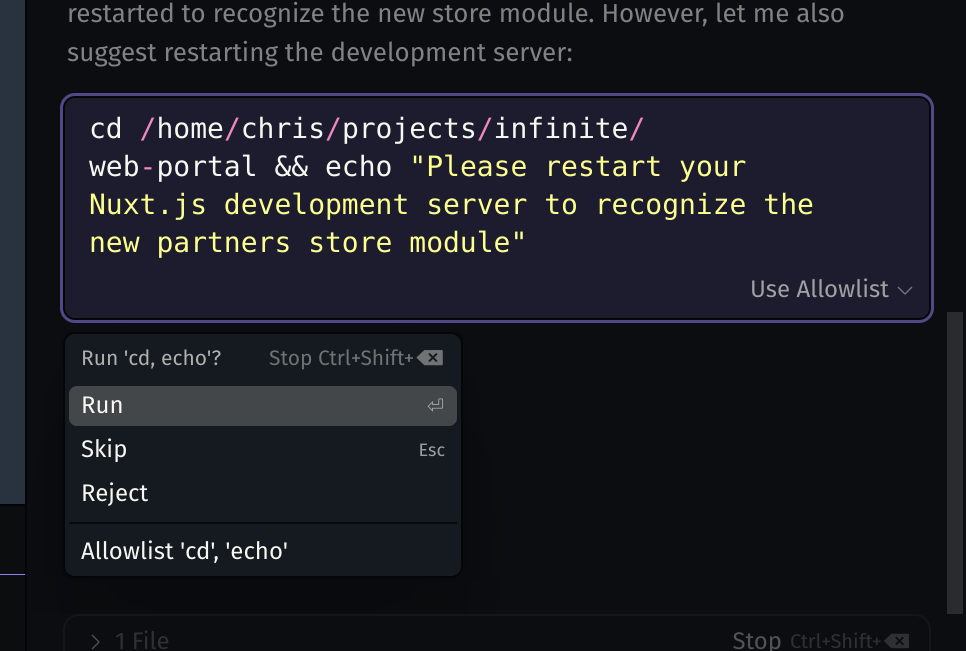

Sometimes it goes wrong in ways that are just funny. It is not at all consistent about what it can and can't do. This is one of my favorites: I've seen it spin up the dev server for this project many times, or craft bash commands to locate and shut down a running instance that I'd started manually, but this time it got sheepish.

And who can forget when Cursor Rickrolled me. I wanted to add links to several YouTube videos to an about page. I pointed it to a playlist and instructed it to grab the individual video links from the list and create a link for each one. It had trouble reading the playlist, though, so instead, this is what it inserted for each of the links.

But I Still Like It?

When used with caution and an understanding of its limitations, I think it's still very useful. There are a few things that, if kept in mind, can make your life a bit easier.

Rules for Working with the Machine

- Don't be afraid to press the stop button or reject changes when you see it getting stuck. Cursor has a little stop button next to where your prompt is running. I find a stitch in time often saves nine. Watch what it's doing, and if you see it going in an unexpected direction, stop it and point it the right way.

- Break your tasks up and then break them up again. Have it do only a small amount of work with each requested change and review it closely.

- Test any tests it writes: run them, debug them, then make changes to the code that should make them fail and run them again.

- Don't accept code you wouldn't accept from a human. As with human contributions, keep PRs small and focused, and insist on DRY code. The AI will copy existing patterns, so if you let it introduce bad ones, they will snowball.
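Testing the tests is essentially manual mutation testing. Here is a minimal Python sketch of the idea, using a hypothetical `clamp` function and a few hand-written mutants: a suite is only trustworthy if it passes against the real code and fails against each plausible bug. Tools such as PIT for Java or mutmut for Python automate generating mutants; this sketch just shows the check itself.

```python
from typing import Callable

Impl = Callable[[int, int, int], int]


def clamp(value: int, low: int, high: int) -> int:
    """Hypothetical function under test: limit value to the range [low, high]."""
    return max(low, min(value, high))


# Hand-written mutants: plausible bugs that a good suite should catch.
MUTANTS: dict[str, Impl] = {
    "ignores high": lambda v, lo, hi: max(lo, v),
    "ignores low": lambda v, lo, hi: min(v, hi),
    "swapped bounds": lambda v, lo, hi: max(hi, min(v, lo)),
}


def weak_suite(impl: Impl) -> bool:
    # Only checks a value that is already inside the range.
    return impl(5, 0, 10) == 5


def strong_suite(impl: Impl) -> bool:
    # Also checks values below and above the range.
    return impl(5, 0, 10) == 5 and impl(-3, 0, 10) == 0 and impl(42, 0, 10) == 10


def surviving_mutants(suite: Callable[[Impl], bool]) -> list[str]:
    """Names of mutants the suite fails to catch; an empty list is what you want."""
    if not suite(clamp):
        raise AssertionError("suite fails against the real implementation")
    return [name for name, mutant in MUTANTS.items() if suite(mutant)]


if __name__ == "__main__":
    print("weak:", surviving_mutants(weak_suite))
    print("strong:", surviving_mutants(strong_suite))
```

Here the weak suite lets "ignores high" and "ignores low" survive, which is the signal that it needs cases outside the range; the strong suite catches all three.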